Manage and control your AI security by composing sovereign AI Systems that contain internal and external models, MCP servers, and connections to applications.

Govern, monitor, and audit your AI systems by applying AI governance policies that align with NIST, OWASP, and OMB out of the box.

Deploy AI agents that automatically inherit your system-wide governance and integrate directly with your sovereign AI systems.

An AI System is an abstraction that consolidates your AI models, MCP servers, tool connections, and agent integrations into a single unit. Prediction Guard’s control plane, which is self-hosted in your infrastructure, applies your sovereign configuration of access, auditing, governance, and monitoring to each AI system.
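To make the abstraction concrete, here is a minimal Python sketch of how such a unit might be modelled. It is purely illustrative: the class names, fields, and sample values (`AISystem`, `GovernancePolicy`, `effective_policy`, `internal-llm`, `support-agent`) are assumptions made for this example and are not Prediction Guard's actual schema or API.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class GovernancePolicy:
    """Hypothetical system-wide settings applied by the control plane."""
    access_roles: tuple[str, ...] = ("admin",)
    audit_logging: bool = True
    frameworks: tuple[str, ...] = ("NIST", "OWASP", "OMB")


@dataclass
class AISystem:
    """Hypothetical container grouping everything that shares one policy."""
    name: str
    policy: GovernancePolicy
    models: list[str] = field(default_factory=list)
    mcp_servers: list[str] = field(default_factory=list)
    tool_connections: list[str] = field(default_factory=list)
    agents: list[str] = field(default_factory=list)

    def components(self) -> list[str]:
        """Return every component registered in this system."""
        return self.models + self.mcp_servers + self.tool_connections + self.agents

    def effective_policy(self, component: str) -> GovernancePolicy:
        """Every registered component inherits the system-wide policy."""
        if component not in self.components():
            raise KeyError(f"{component!r} is not part of system {self.name!r}")
        return self.policy


# Illustrative values only; these names are not real Prediction Guard resources.
system = AISystem(
    name="production",
    policy=GovernancePolicy(),
    models=["internal-llm"],
    agents=["support-agent"],
)
```

The point of the sketch is the single `policy` field: a component does not carry its own governance settings, so anything added to the system picks up the same access, auditing, and monitoring configuration.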

Prediction Guard supports flexible AI System deployment across different environments:

Your deployed Prediction Guard platform provides:

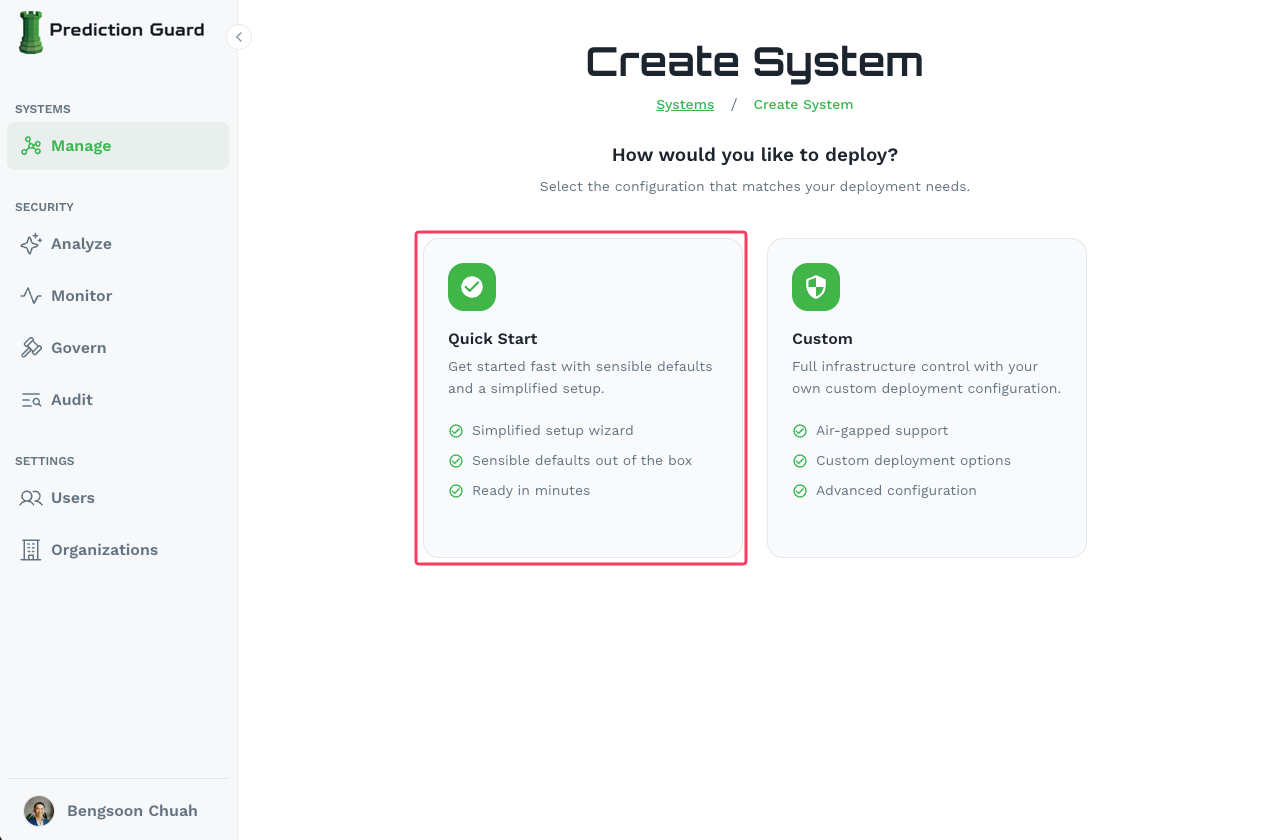

Start by creating your system in the Admin Console:

Navigate to your Admin Console (e.g. admin.predictionguard.com) and log in

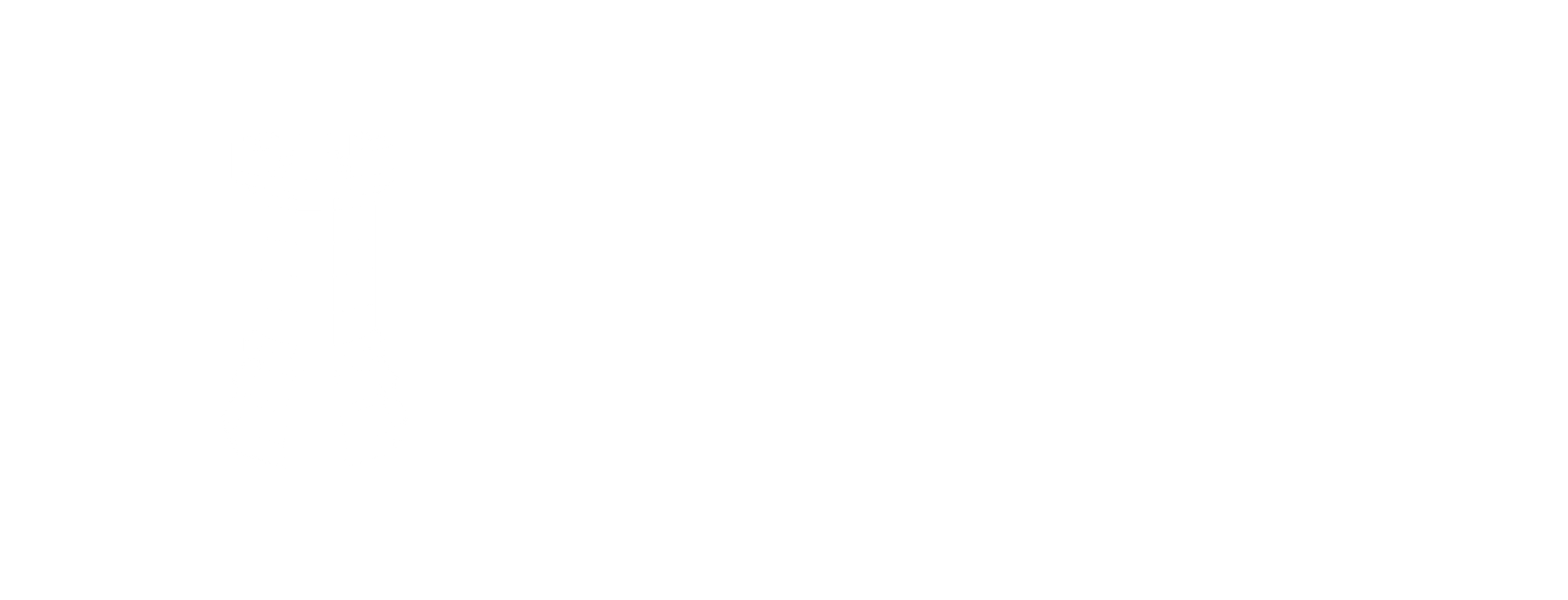

Go to Systems → Manage and click “Create System” in the top-right corner

Give your system a name (e.g. production, staging)

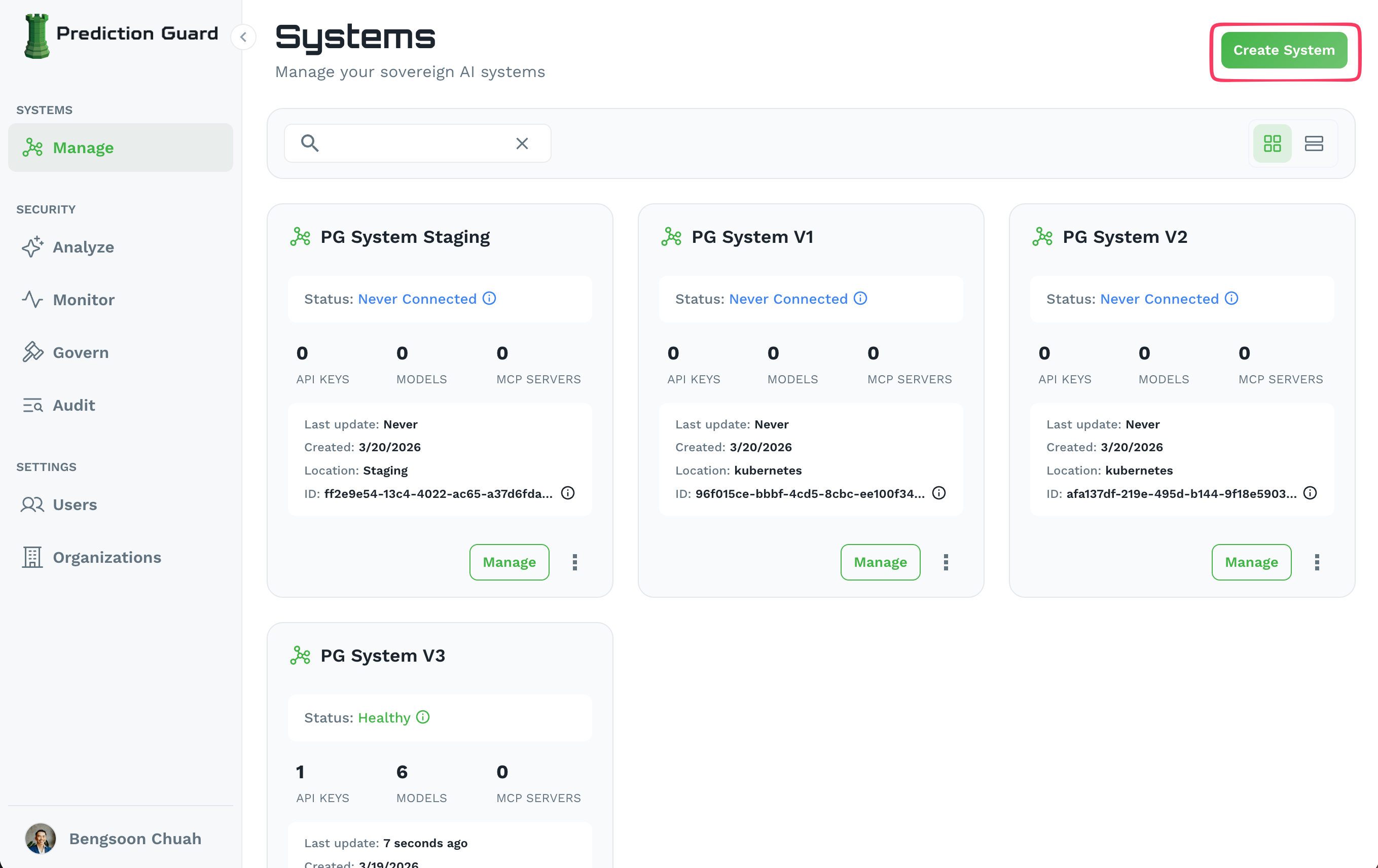

Prediction Guard can be deployed anywhere that fits your needs. Choose the deployment method that works best for your environment:

Deploy in your own data center:

Deploy on major cloud providers:

Deploy in isolated environments. Note that air-gapped deployment requires Custom configuration instead of Quick Start during system creation:

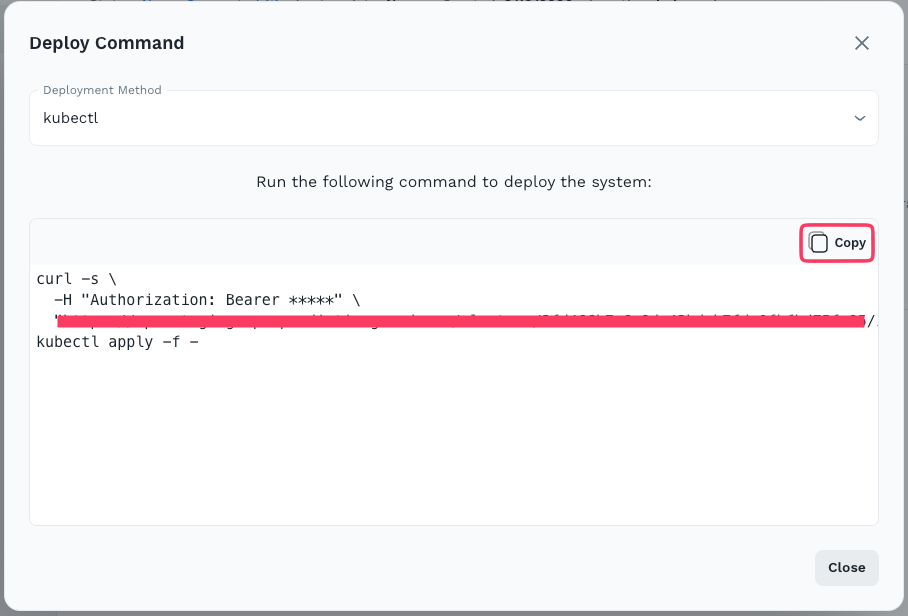

Follow the specific deployment guide for your chosen environment. When ready, click the Deploy button on your system in the Admin Console to get the installation command.

The deployment process will:

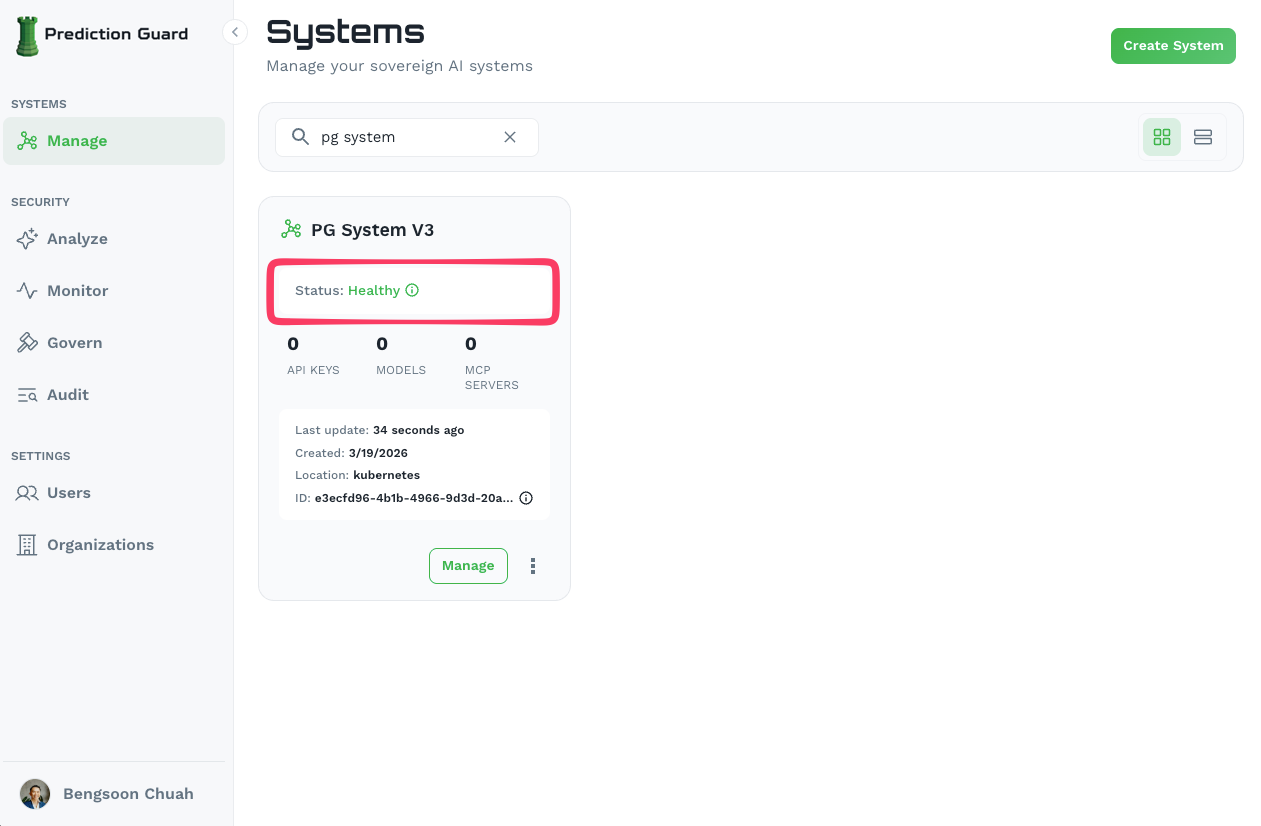

kubectl access to your cluster and the predictionguard namespace

4. Verify the deployment: your system will show as Healthy in the Admin Console once complete
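If you have kubectl access, you can also inspect the pods directly alongside the Admin Console status. The following is a minimal sketch, not an official health check: it assumes the platform's pods run in the predictionguard namespace mentioned above and relies only on the standard JSON output of `kubectl get pods -n <namespace> -o json`. The helper names `unready_pods` and `check_namespace` are hypothetical.

```python
import json
import subprocess


def unready_pods(pod_list: dict) -> list[str]:
    """Return names of pods that are neither fully ready nor completed."""
    problems = []
    for pod in pod_list.get("items", []):
        status = pod.get("status", {})
        phase = status.get("phase")
        if phase == "Succeeded":  # completed Job pods are fine
            continue
        statuses = status.get("containerStatuses", [])
        all_ready = bool(statuses) and all(c.get("ready") for c in statuses)
        if phase != "Running" or not all_ready:
            problems.append(pod["metadata"]["name"])
    return problems


def check_namespace(namespace: str = "predictionguard") -> list[str]:
    """Query the cluster with kubectl and report pods that are not ready.

    Assumes kubectl is on PATH and already configured for your cluster.
    """
    result = subprocess.run(
        ["kubectl", "get", "pods", "-n", namespace, "-o", "json"],
        capture_output=True,
        text=True,
        check=True,
    )
    return unready_pods(json.loads(result.stdout))
```

Calling `check_namespace()` returns an empty list when every pod in the namespace is running with all containers ready. Any names it does return are a starting point for `kubectl describe pod` or `kubectl logs`.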

Once your system is healthy, connect AI models to start building:

Once your system is deployed and healthy, you can configure it further: