First, create a Google Kubernetes Engine (GKE) cluster.
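The cluster size and node types Prediction Guard needs are not specified here. As a minimal sketch, assuming the `gcloud` CLI is installed and authenticated, the Python helper below builds `gcloud container clusters create` and then `gcloud container clusters get-credentials`, which adds the cluster to your kubeconfig so `kubectl` on this machine can reach it. `CLUSTER_NAME`, `ZONE`, `NUM_NODES`, and `MACHINE_TYPE` are hypothetical placeholders, and `create_and_connect` is an illustrative helper, not part of Prediction Guard's tooling.

```python
import shlex
import subprocess

# Hypothetical placeholders: substitute your own cluster settings.
CLUSTER_NAME = "predictionguard-cluster"
ZONE = "us-central1-a"
NUM_NODES = 3
MACHINE_TYPE = "e2-standard-8"


def create_cluster_cmd(name: str, zone: str, num_nodes: int, machine_type: str) -> list[str]:
    """Argument list for `gcloud container clusters create`."""
    return [
        "gcloud", "container", "clusters", "create", name,
        "--zone", zone,
        "--num-nodes", str(num_nodes),
        "--machine-type", machine_type,
    ]


def get_credentials_cmd(name: str, zone: str) -> list[str]:
    """Argument list that adds the cluster's credentials to your kubeconfig for kubectl."""
    return ["gcloud", "container", "clusters", "get-credentials", name, "--zone", zone]


def create_and_connect(dry_run: bool = True) -> list[list[str]]:
    """Print both commands and, unless dry_run is True, run them in order."""
    cmds = [
        create_cluster_cmd(CLUSTER_NAME, ZONE, NUM_NODES, MACHINE_TYPE),
        get_credentials_cmd(CLUSTER_NAME, ZONE),
    ]
    for cmd in cmds:
        print("+", shlex.join(cmd))
        if not dry_run:
            # check=True raises CalledProcessError if gcloud exits non-zero.
            subprocess.run(cmd, check=True)
    return cmds


if __name__ == "__main__":
    # Prints the commands only; call create_and_connect(dry_run=False) to execute them.
    create_and_connect()
```

Defaulting to a dry run lets you review the exact `gcloud` invocations before any billable resources are created.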

If you have not already created your AI system in the Admin Console, follow the Quick Start or the Custom System guide to create your system and generate the installation command.

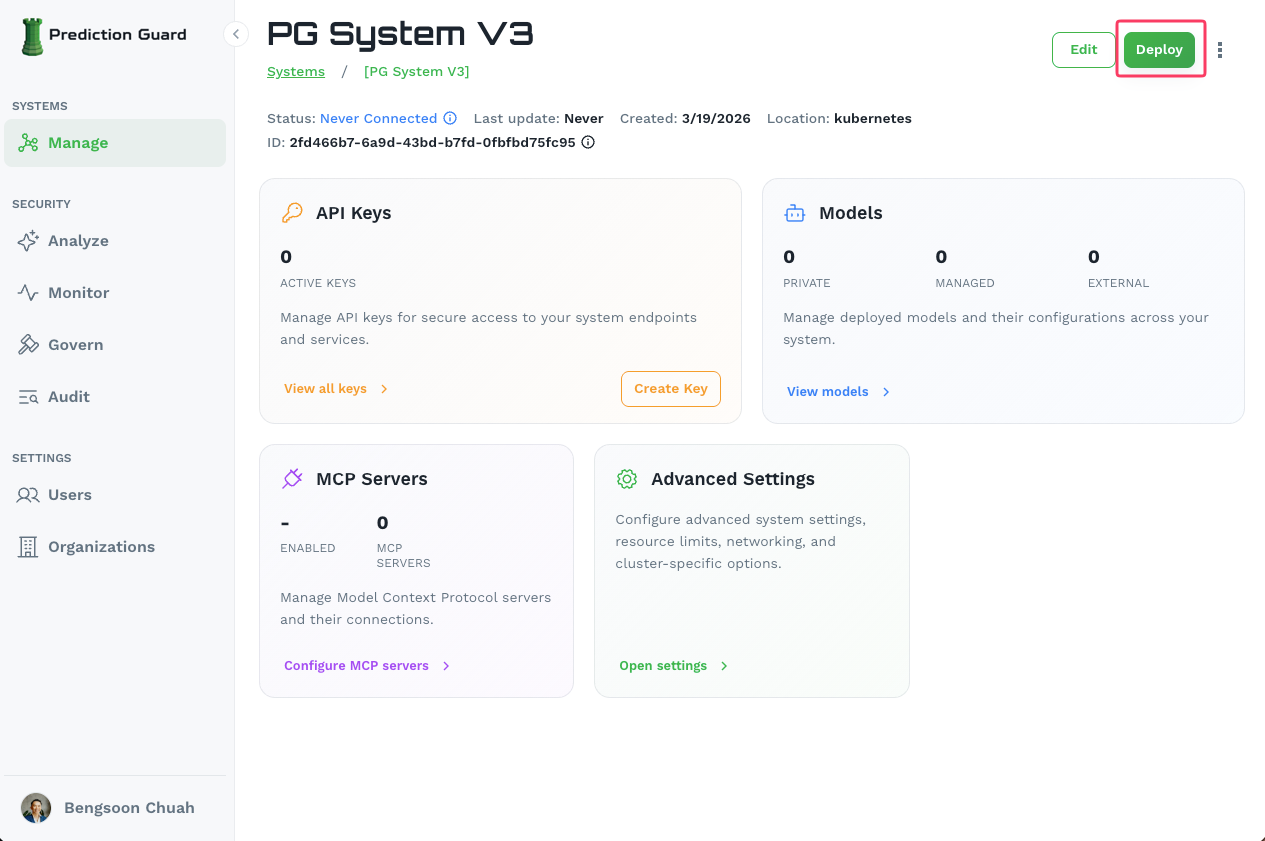

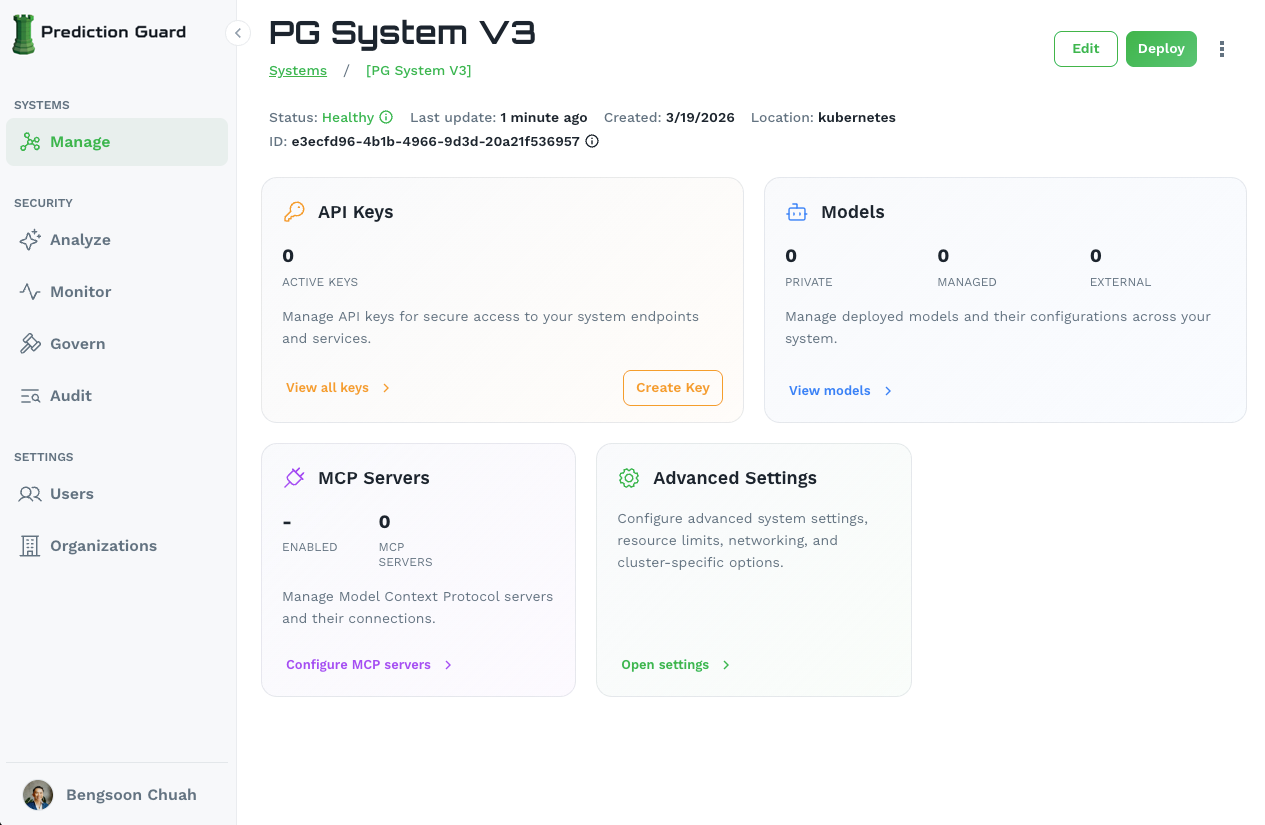

Navigate to your system in the Admin Console and click the Deploy button in the top-right corner of the system management page.

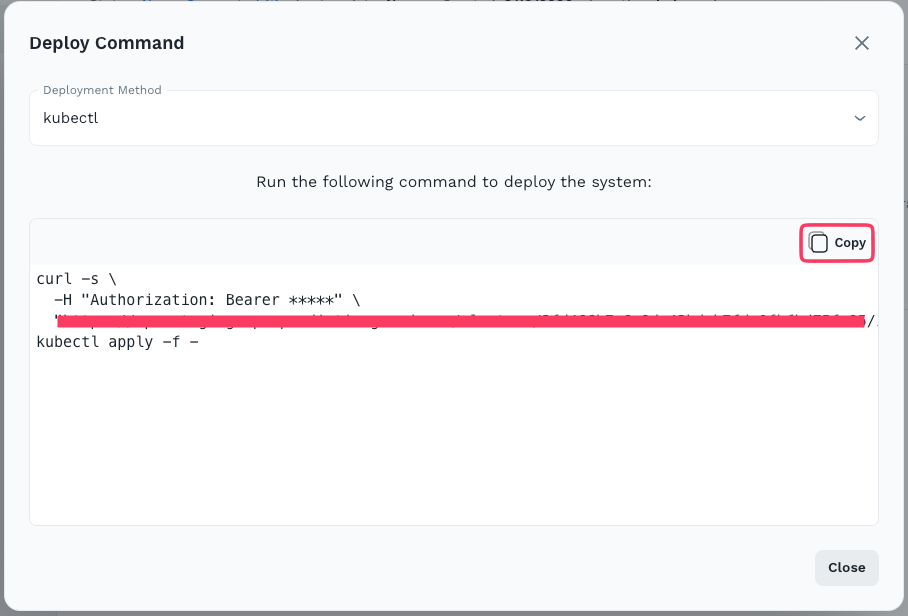

This opens the Deploy Command modal. Select kubectl as the deployment method, then click Copy to copy the generated installation command.

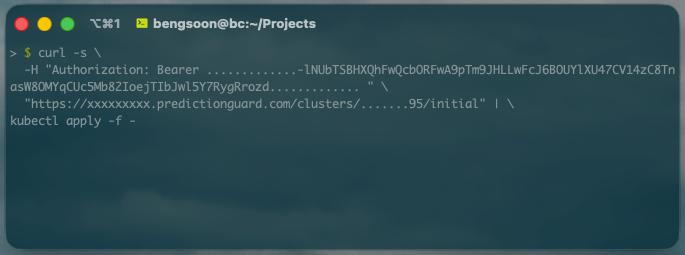

Paste and run the copied command on a machine that has kubectl access to your GKE cluster. The command authenticates with your Prediction Guard instance and bootstraps all services into the predictionguard namespace.
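Since the copied command runs through `kubectl`, it will presumably act on whichever cluster the active kubeconfig context points at, so it can be worth confirming that context first. A minimal sketch, assuming the context was created by `gcloud container clusters get-credentials`, which names contexts `gke_PROJECT_LOCATION_CLUSTER`; `parse_gke_context` and `main` are hypothetical helpers.

```python
import subprocess
from typing import NamedTuple, Optional


class GkeContext(NamedTuple):
    project: str
    location: str
    cluster: str


def parse_gke_context(context: str) -> Optional[GkeContext]:
    """Parse a gke_PROJECT_LOCATION_CLUSTER context name; return None for non-GKE contexts."""
    # GKE project IDs, locations, and cluster names do not contain underscores,
    # so splitting on the first three underscores recovers the parts.
    parts = context.strip().split("_", 3)
    if len(parts) != 4 or parts[0] != "gke":
        return None
    return GkeContext(*parts[1:])


def current_context() -> str:
    """Return the name of kubectl's active context."""
    result = subprocess.run(
        ["kubectl", "config", "current-context"],
        check=True,
        capture_output=True,
        text=True,
    )
    return result.stdout.strip()


def main() -> None:
    ctx = current_context()
    gke = parse_gke_context(ctx)
    if gke is None:
        print(f"Context {ctx!r} does not look like a GKE context; check it before installing.")
    else:
        print(f"kubectl targets cluster {gke.cluster} in {gke.location} (project {gke.project}).")
```

Call `main()` before pasting the install command.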

After a few minutes, verify the installation by listing the pods in the predictionguard namespace (for example, with `kubectl get pods -n predictionguard`):

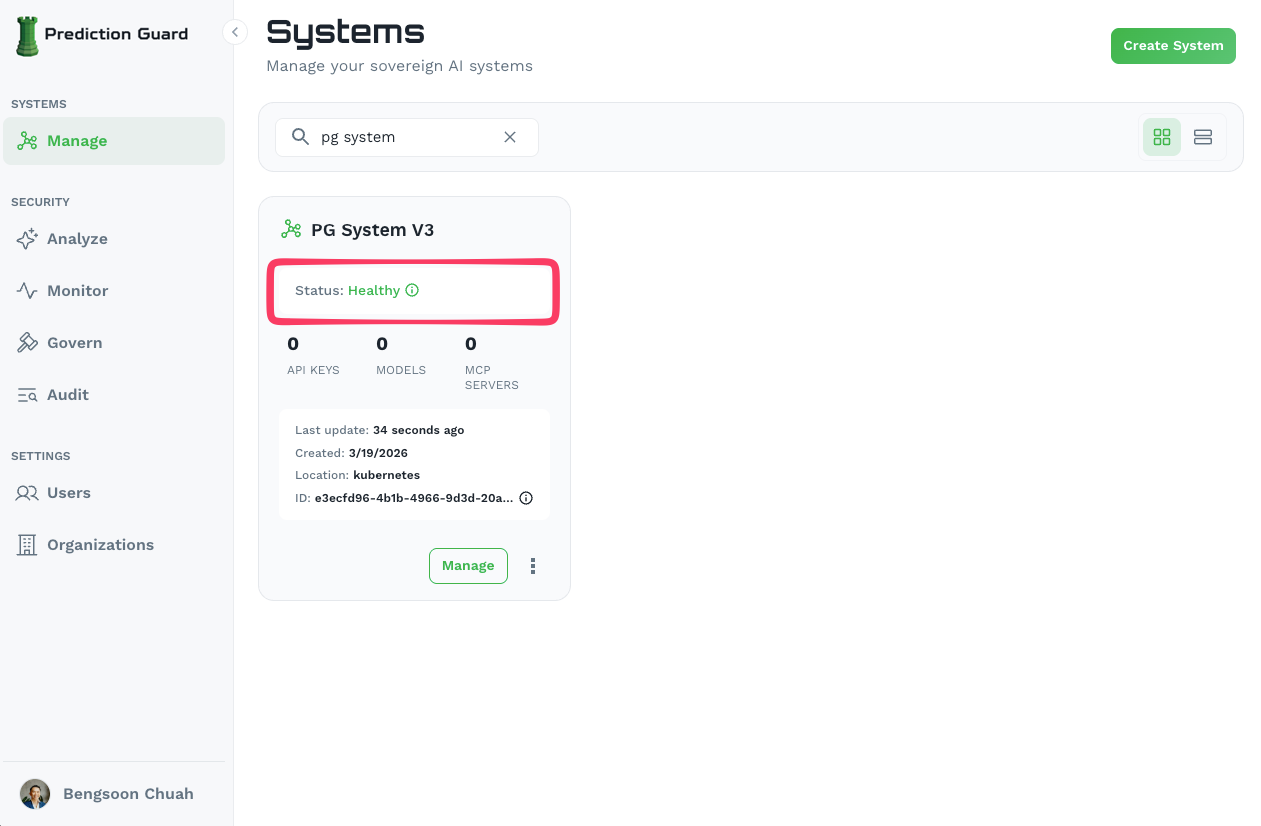

You should see pods in the Running state, including pg-inside, which indicates that the system has been installed successfully. The system also shows as Healthy in the Admin Console.
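The same check can be scripted. A minimal sketch, assuming `kubectl` already points at the cluster: the helpers below run `kubectl get pods -n predictionguard -o json`, report each pod's `status.phase`, and treat the install as up when a pod whose name starts with `pg-inside` is `Running`. The prefix match is an assumption, since controller-managed pod names usually carry a generated suffix; the helper names are hypothetical.

```python
import json
import subprocess

NAMESPACE = "predictionguard"  # namespace used by the install command


def pod_phases(pods_json: dict) -> dict[str, str]:
    """Map each pod name to its status.phase, from `kubectl get pods -o json` output."""
    return {
        item["metadata"]["name"]: item.get("status", {}).get("phase", "Unknown")
        for item in pods_json.get("items", [])
    }


def is_installed(phases: dict[str, str], prefix: str = "pg-inside") -> bool:
    """True if at least one pod whose name starts with `prefix` is Running (assumed naming)."""
    return any(
        name.startswith(prefix) and phase == "Running" for name, phase in phases.items()
    )


def fetch_pods(namespace: str = NAMESPACE) -> dict:
    """Run kubectl and return the parsed pod list for `namespace`."""
    result = subprocess.run(
        ["kubectl", "get", "pods", "-n", namespace, "-o", "json"],
        check=True,
        capture_output=True,
        text=True,
    )
    return json.loads(result.stdout)


def main() -> None:
    phases = pod_phases(fetch_pods())
    for name, phase in sorted(phases.items()):
        print(f"{name}\t{phase}")
    print("pg-inside running:", is_installed(phases))
```

Call `main()` once the install command has finished; pods can take a few minutes to leave `Pending`.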

Prediction Guard comes preconfigured with NGINX and a default Ingress, which you can enable for the system in the Edit section of the Systems page. There you can configure the domain names you want to use; NGINX is then deployed into the predictionguard namespace with settings preconfigured for the Prediction Guard API. Finally, make sure your DNS records point to the ingress IP address of your Kubernetes cluster, or to your GCP load balancer if you route traffic through one.
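To confirm that DNS is routable to the ingress, a minimal sketch, assuming DNS points directly at the Ingress rather than at a separate GCP load balancer in front of it: the helpers below read the host rules and the external IPs from `kubectl get ingress -n predictionguard -o json`, then check that each host resolves to one of those IPs. Only `ip` entries in the Ingress status are considered, which matches how GCP load balancers report addresses. The helper names are hypothetical.

```python
import json
import socket
import subprocess
from collections.abc import Callable

NAMESPACE = "predictionguard"  # namespace the NGINX Ingress is deployed into


def ingress_targets(ingress_json: dict) -> dict[str, set[str]]:
    """Map each Ingress host rule to the external IPs in that Ingress's status."""
    targets: dict[str, set[str]] = {}
    for item in ingress_json.get("items", []):
        lb_entries = item.get("status", {}).get("loadBalancer", {}).get("ingress") or []
        ips = {entry["ip"] for entry in lb_entries if "ip" in entry}
        for rule in item.get("spec", {}).get("rules") or []:
            host = rule.get("host")
            if host:
                targets.setdefault(host, set()).update(ips)
    return targets


def dns_mismatches(
    targets: dict[str, set[str]],
    resolve: Callable[[str], str] = socket.gethostbyname,
) -> dict[str, str]:
    """Return {host: problem} for each host whose DNS lookup misses the ingress IPs."""
    problems: dict[str, str] = {}
    for host, ips in targets.items():
        if not ips:
            problems[host] = "ingress has no external IP yet"
            continue
        try:
            addr = resolve(host)  # gethostbyname returns a single IPv4 address
        except OSError as err:  # socket.gaierror subclasses OSError
            problems[host] = f"DNS lookup failed: {err}"
            continue
        if addr not in ips:
            problems[host] = f"resolves to {addr}, expected one of {sorted(ips)}"
    return problems


def main(namespace: str = NAMESPACE) -> None:
    result = subprocess.run(
        ["kubectl", "get", "ingress", "-n", namespace, "-o", "json"],
        check=True,
        capture_output=True,
        text=True,
    )
    targets = ingress_targets(json.loads(result.stdout))
    if not targets:
        print(f"No Ingress host rules found in namespace {namespace!r}.")
        return
    problems = dns_mismatches(targets)
    for host, problem in sorted(problems.items()):
        print(f"{host}: {problem}")
    if not problems:
        print("Every Ingress host resolves to an ingress IP.")
```

Passing `resolve` as a parameter keeps the comparison logic testable without real DNS lookups.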

Once deployed, your AI system is accessible and manageable from the Admin Console.

From here you can:

- Use kubectl to manage cluster resources directly

Your deployment automatically integrates with:

Need help? Contact our support team for assistance with your GCP deployment.